HEDGE ALGEBRAS WITH LIMITED NUMBER OF HEDGES AND APPLIED TO FUZZY CLASSIFICATION PROBLEMS


DOI: https://doi.org/10.15625/0866-708X/48/5/1187

Abstract

SUMMARY

In this paper we introduce hedge algebras with a limited number of hedges, called AX2. In AX2, we consider the g-grade similarity fuzziness interval of a linguistic term x, denoted Tg(x), which is constructed from two (g+k)-fuzziness intervals such that υ(x) lies inside the interval (Definition 2.1, where k = l(x) is the length of x). There is a system of k-similarity fuzziness intervals, denoted S(k), corresponding to the set X(k) of linguistic terms whose length is less than or equal to k (Definition 2.2). In particular, we prove that this system always exists and is a partition of [0,1] constructed from a set of (k+2)-fuzziness intervals (Theorem 2.1), so AX2 with its partition of k-similarity fuzziness intervals can be used in any real domain (Theorem 2.2).
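As a rough illustration of how fuzziness intervals can tile [0,1], the following sketch builds the level-k fuzziness intervals for a hedge algebra with two hedges. Everything specific here is an assumption rather than something stated in the abstract: the hedge names L ("Little") and V ("Very"), the generators c- and c+, the parameters theta = fm(c-) and mu_L = μ(L), and the usual hedge-algebra sign rule used for ordering. It covers only plain fuzziness intervals, not the similarity intervals Tg(x) of Definition 2.1.

```python
from fractions import Fraction

def fuzziness_intervals(k, theta, mu_L):
    """Return the level-k fuzziness intervals as (term, left, right), left to right.

    Assumptions (not taken from the abstract): two hedges, L (negative) and
    V (positive); fm(c-) = theta, fm(c+) = 1 - theta; fm(h x) = mu(h) * fm(x)
    with mu(L) + mu(V) = 1; sign(V x) = sign(x) and sign(L x) = -sign(x).
    """
    mu = {"L": mu_L, "V": 1 - mu_L}
    # Level 1: the two generators, stored as (term, sign, fuzziness measure).
    level = [("c-", -1, theta), ("c+", +1, 1 - theta)]
    for _ in range(k - 1):
        nxt = []
        for term, sign, fm in level:
            children = {
                h: (h + " " + term, sign if h == "V" else -sign, mu[h] * fm)
                for h in "LV"
            }
            # If V x > x the children run L x, V x from left to right;
            # otherwise the order is reversed.
            order = "LV" if sign > 0 else "VL"
            nxt.extend(children[h] for h in order)
        level = nxt
    # Lay the intervals end to end, starting at 0.
    intervals, left = [], Fraction(0)
    for term, _, fm in level:
        intervals.append((term, left, left + fm))
        left += fm
    return intervals

for term, lo, hi in fuzziness_intervals(2, Fraction(1, 2), Fraction(2, 5)):
    print(f"{term}: [{lo}, {hi}]")
```

Under these assumptions the intervals at each level are contiguous and their lengths sum to 1, so they partition [0,1]. With theta = 1/2 and mu_L = 2/5, the level-2 terms come out in the order V c-, L c-, L c+, V c+.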

Fuzzy rule-based systems are widely used for classification problems. The design of such systems has two main goals: maximizing accuracy and minimizing complexity. Various approaches have been proposed to deal with this problem [19, 22, 23, 25]. In Section 3, we propose a fuzzy rule extraction algorithm (RFRG) for classification problems based on the partitions S(k) of the attribute domains. The rules generated by this algorithm, denoted S0, contain all attributes, i.e. their antecedents include every attribute of the problem; we call them a robust fuzzy rule set. These rules can improve accuracy up to 100% by choosing particular k-similarity fuzziness intervals of the attributes (Corollary 2.2). However, this may increase the complexity of the fuzzy rule set. To overcome this problem, we design an algorithm that optimizes the fuzzy rule set using genetic algorithms and simulated annealing [1, 5, 7, 8, 26]. Solutions of this optimization problem use a real-valued encoding that represents the indices of the rules and attributes in S0 to be selected, and the fitness function is a weighted combination of three objectives: maximizing the performance of the rule set, minimizing the number of rules, and minimizing the average rule length. In Section 4, we apply our method to the glass problem from the UCI machine learning repository. When all patterns are used for training, the results are better than those of [25]: the best accuracy of our method is 78.04% with 14 fuzzy rules, while [Mansoori-07] achieves 78.5% with 95 fuzzy rules. In the 10-fold experiment, the best accuracy of our method on testing patterns is 64.67% with an average of 15.9 fuzzy rules, compared with 62.97% with an average of 28.32 fuzzy rules in [19]. The comparison shows that the accuracy of our method is better than that of [19] and [25].
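The weighted three-objective fitness can be pictured with a small sketch. The abstract does not give the exact formula, so the linear form, the normalization of each term, the function and parameter names, and the default weights below are all hypothetical.

```python
def fitness(accuracy, n_selected, n_total, avg_len, n_attrs,
            weights=(0.8, 0.1, 0.1)):
    """Weighted sum of three objectives, each scaled to [0, 1].

    Hypothetical form (not taken from the paper):
      accuracy   - fraction of patterns classified correctly (maximize)
      n_selected - number of rules kept out of n_total in S0 (minimize)
      avg_len    - average number of attributes per kept rule,
                   out of n_attrs attributes (minimize)
    """
    w_acc, w_rules, w_len = weights
    rule_term = 1 - n_selected / n_total   # 1 when no rules are kept
    len_term = 1 - avg_len / n_attrs       # 1 when antecedents are empty
    return w_acc * accuracy + w_rules * rule_term + w_len * len_term

# Two candidate rule sets with equal accuracy: the smaller one scores higher.
small = fitness(0.75, n_selected=15, n_total=100, avg_len=3, n_attrs=9)
large = fitness(0.75, n_selected=90, n_total=100, avg_len=3, n_attrs=9)
print(small > large)
```

A scalarized fitness like this lets a single-objective search such as a genetic algorithm trade accuracy against rule count and rule length; the balance depends entirely on the chosen weights.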


License

Vietnam Journal of Science and Technology (VJST) is an open access, peer-reviewed journal. All academic publications are made free for everyone to read and download. In addition, articles are published under the terms of the Creative Commons Attribution-ShareAlike 4.0 International (CC BY-SA) License, which permits use, distribution, and reproduction in any medium, provided the original work is properly cited and the ShareAlike terms are followed.

Copyright on any research article published in VJST is retained by the respective author(s), without restrictions. Authors grant VAST Journals System a license to publish the article and identify itself as the original publisher. By giving VJST permission, via the VJST journal portal or another channel, to publish their research work in VJST, the author(s) agree to all the terms and conditions of the https://creativecommons.org/licenses/by-sa/4.0/ License and the terms and conditions set by VJST.

Authors are responsible for securing all necessary copyright permissions for the use of third-party materials in their manuscript.

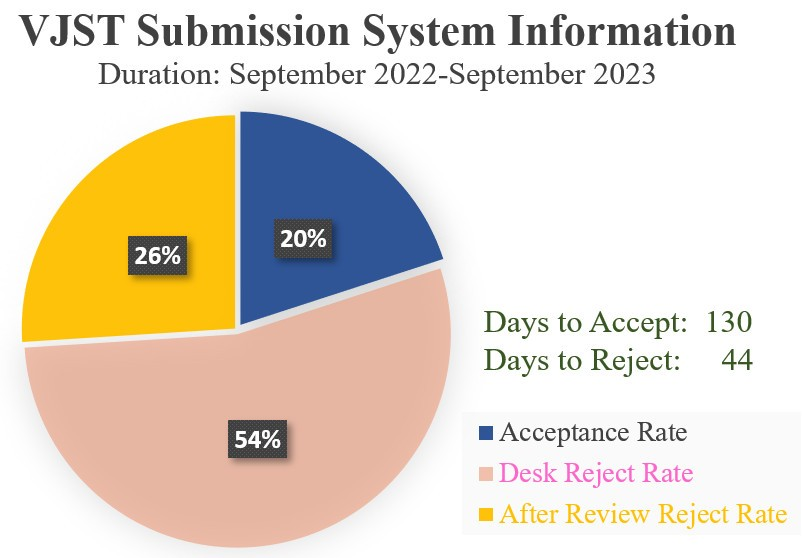

Vietnam Journal of Science and Technology (VJST) is pleased to notice:
