An empirical evaluation of feature extraction for Vietnamese fruit classification

DOI: https://doi.org/10.15625/2525-2518/16299

Keywords: fruit classification, image classification, feature extraction, UIT-VinaFruit20

Abstract

In recent years, fruit production in Viet Nam has made significant progress in both scale and product structure. Viet Nam's climate suits tropical and subtropical fruits as well as some temperate fruits. Given this ecological diversity, automatic fruit classification systems have attracted considerable attention. In this paper, we address the problem of Vietnamese fruit classification. The first requirement for exploring this problem is a suitable dataset. To this end, we introduce UIT-VinaFruit20, a novel Vietnamese fruit image dataset of 63,541 images covering 20 types of fruit from the three regions of Viet Nam. The diversity of fruits across distinct regions poses many new challenges. In addition, we extract both hand-crafted and deep learning features and pair them with a Support Vector Machine (SVM) classifier to classify Vietnamese fruits. Extensive experiments on the UIT-VinaFruit20 dataset provide a comprehensive evaluation and analysis. The EfficientNetB0 features achieved the best results, with an Accuracy of 85.465% and a Macro F1-score of 86.919%.
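The abstract reports results as Accuracy and Macro F1-score. As a minimal sketch (not the authors' evaluation code), both metrics can be computed in plain Python from lists of true and predicted labels. The fruit labels below are hypothetical and are not drawn from UIT-VinaFruit20; classes that appear only in the predictions are included in the macro average, which we understand to match the default behaviour of scikit-learn's `f1_score(..., average="macro")`.

```python
from collections import Counter


def accuracy(y_true, y_pred):
    """Fraction of samples whose predicted label matches the true label."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must be non-empty and of equal length")
    correct = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    return correct / len(y_true)


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 over every label in y_true or y_pred."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must be non-empty and of equal length")
    true_pos = Counter(t for t, p in zip(y_true, y_pred) if t == p)
    pred_count = Counter(y_pred)  # TP + FP per class
    true_count = Counter(y_true)  # TP + FN per class
    labels = set(y_true) | set(y_pred)
    scores = []
    for label in sorted(labels):
        precision = true_pos[label] / pred_count[label] if pred_count[label] else 0.0
        recall = true_pos[label] / true_count[label] if true_count[label] else 0.0
        total = precision + recall
        # A class with zero precision and zero recall contributes F1 = 0.
        scores.append(2 * precision * recall / total if total else 0.0)
    return sum(scores) / len(scores)


if __name__ == "__main__":
    # Hypothetical labels for illustration only; not UIT-VinaFruit20 data.
    y_true = ["mango", "mango", "durian", "lychee"]
    y_pred = ["mango", "durian", "durian", "lychee"]
    print(accuracy(y_true, y_pred))  # 0.75
    print(round(macro_f1(y_true, y_pred), 4))  # 0.7778
```

Because Macro F1 gives every class equal weight regardless of how many test images it has, it can differ from, and even exceed, Accuracy, as it does in the EfficientNetB0 result reported in the abstract.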

References

Thuan Trong Nguyen, Thuan Q. Nguyen, Dung Vo, Vi Nguyen, Ngoc Ho, Nguyen D. Vo, Kiet Van Nguyen, Khang Nguyen - VinaFood21: A Novel Dataset for Evaluating Vietnamese Food Recognition, Proceedings of the International Conference on Computing and Communication Technologies (RIVF), 2021, pp. 1-6.

Vo Thi Mot, Vo Duy Nguyen, Nguyen Tan Tran Minh Khang - Extract Features and Classification of Chest X-Ray Images, TNU Journal of Science and Technology. 226 (07) (2021). https://doi.org/10.34238/tnu-jst.

Chen X., Zhu Y., Zhou H., Diao L., Wang D. - ChineseFoodNet: A large-scale image dataset for Chinese food recognition, arXiv preprint arXiv:1705.02743, 2017.

Kaur P., Sikka K., Wang W., Belongie S., Divakaran A. - FoodX-251: A dataset for fine-grained food classification, arXiv preprint arXiv:1907.06167, 2019.

Min W., Liu L., Wang Z., Luo Z., Wei X., Wei X., Jiang S. - ISIA Food-500: A dataset for large-scale food recognition via stacked global-local attention network, Proceedings of the 28th ACM International Conference on Multimedia, 2020, pp. 393-401.

Hou S., Feng Y., Wang Z. - VegFru: A domain-specific dataset for fine-grained visual categorization, Proceedings of the IEEE International Conference on Computer Vision, 2017, pp. 541-549.

Hung C., Underwood J., Nieto J., Sukkarieh S. - A feature learning based approach for automated fruit yield estimation, Proceedings of Field and Service Robotics, 2015, pp. 485-498.

Seng W. C., Mirisaee S. H. - A new method for fruits recognition system, Proceedings of the International Conference on Electrical Engineering and Informatics, 2009, pp. 130-134.

Hou L., Wu Q., Sun Q., Yang H., Li P. - Fruit recognition based on convolution neural network, Proceedings of the International Conference on Natural Computation, Fuzzy Systems and Knowledge Discovery (ICNC-FSKD), 2016, pp. 18-22.

Duong L. T., Nguyen P. T., Di Sipio C., Di Ruscio D. - Automated fruit recognition using EfficientNet and MixNet, Computers and Electronics in Agriculture 9 (5) (2020). https://doi.org/10.1016/j.jmrt.2020.06.079.

Kabir H. M., Abdar M., Jalali S. M., Khosravi A., Atiya A. F., Nahavandi S., Srinivasan D. - SpinalNet: Deep neural network with gradual input, arXiv preprint arXiv:2007.03347, 2020.

Dalal N., Triggs B. - Histograms of oriented gradients for human detection, Proceedings of the IEEE computer society conference on computer vision and pattern recognition, 2005, pp. 886-893.

Ojala T., Pietikäinen M., Harwood D. - A comparative study of texture measures with classification based on featured distributions, Pattern Recognition 29 (1) (1996) 51-59.

Simonyan K., Zisserman A. - Very deep convolutional networks for large-scale image recognition, arXiv preprint arXiv:1409.1556, 2014.

He K., Zhang X., Ren S., Sun J. - Deep residual learning for image recognition, Proceedings of the IEEE conference on computer vision and pattern recognition, 2016, pp. 770-778.

Huang G., Liu Z., Van Der Maaten L., Weinberger K. Q. - Densely connected convolutional networks, Proceedings of the IEEE conference on computer vision and pattern recognition, 2017, pp. 4700-4708.

Szegedy C., Liu W., Jia Y., Sermanet P., Reed S., Anguelov D., Erhan D., Vanhoucke V., Rabinovich A. - Going deeper with convolutions, Proceedings of the IEEE conference on computer vision and pattern recognition, 2015, pp. 1-9.

Szegedy C., Ioffe S., Vanhoucke V., Alemi A. - Inception-v4, Inception-ResNet and the impact of residual connections on learning, Proceedings of the AAAI conference on artificial intelligence, 2017, pp. 4278-4284.

Chollet F. - Xception: Deep learning with depthwise separable convolutions, Proceedings of the IEEE conference on computer vision and pattern recognition, 2017, pp. 1251-1258.

Howard A. G., Zhu M., Chen B., Kalenichenko D., Wang W., Weyand T., Andreetto M., Adam H. - MobileNets: Efficient convolutional neural networks for mobile vision applications, arXiv preprint arXiv:1704.04861, 2017.

Zoph B., Vasudevan V., Shlens J., Le Q. V. - Learning transferable architectures for scalable image recognition, Proceedings of the IEEE conference on computer vision and pattern recognition, 2018, pp. 8697-8710.

Tan M., Le Q. - EfficientNet: Rethinking model scaling for convolutional neural networks, Proceedings of the International Conference on Machine Learning, 2019, pp. 6105-6114.

License

This work is licensed under a Creative Commons Attribution-ShareAlike 4.0 International License.

Vietnam Journal of Science and Technology (VJST) is an open access, peer-reviewed journal. All publications are free for everyone to read and download. Articles are published under the terms of the Creative Commons Attribution-ShareAlike 4.0 International (CC BY-SA) License, which permits use, distribution, and reproduction in any medium, provided the original work is properly cited and the ShareAlike terms are followed.

Copyright on any research article published in VJST is retained by the respective author(s), without restrictions. Authors grant VAST Journals System a license to publish the article and to identify itself as the original publisher. By giving VJST permission to publish their research work, either through the VJST journal portal or another channel, the author(s) agree to all the terms and conditions of the Creative Commons Attribution-ShareAlike 4.0 License (https://creativecommons.org/licenses/by-sa/4.0/) and to the terms and conditions set by VJST.

Authors are responsible for securing all necessary copyright permissions for the use of third-party materials in their manuscript.

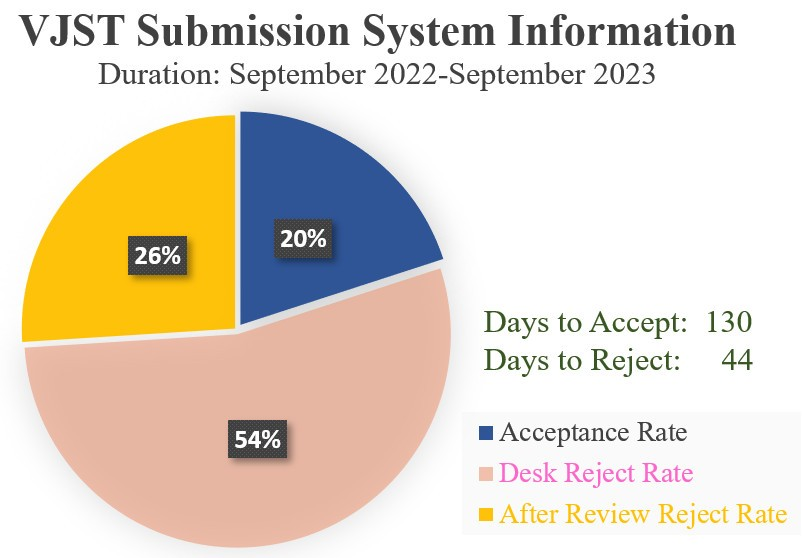

Vietnam Journal of Science and Technology (VJST) is pleased to notice:
