Adapting knowledge graph embedding for neural machine translation

DOI:

https://doi.org/10.15625/2525-2518/21463

Keywords:

neural machine translation, knowledge graph embedding, graph embedding

Abstract

In the era of deep learning and sequence-to-sequence architectures, Neural Machine Translation (NMT) has improved significantly in efficiency and performance. However, NMT models still require large amounts of training data, which is a particular challenge for low-resource language pairs and leads to the corpus sparsity problem. This paper explores the integration of Knowledge Graphs (KGs) into NMT models to improve the translation of rare and out-of-vocabulary (OOV) words. Specifically, our method, KGE-NMT, leverages structured knowledge from KGs to improve the semantic representation of entities in sentences, thereby enhancing overall translation quality. Experimental results on the English-Vietnamese and English-German language pairs from the IWSLT datasets show that our KGE-NMT model significantly outperforms baseline models, confirming the benefits of incorporating external knowledge into the machine translation process.

License

This work is licensed under a Creative Commons Attribution-ShareAlike 4.0 International License.

Vietnam Journal of Science and Technology (VJST) is an open access, peer-reviewed journal. All publications are free for everyone to read and download. In addition, articles are published under the terms of the Creative Commons Attribution-ShareAlike 4.0 International (CC BY-SA) License, which permits use, distribution and reproduction in any medium, provided the original work is properly cited and the ShareAlike terms are followed.

Copyright on any research article published in VJST is retained by the respective author(s), without restrictions. Authors grant VAST Journals System a license to publish the article and identify itself as the original publisher. By giving VJST permission, via the VJST journal portal or another channel, to publish their research work in VJST, the author(s) agree to all the terms and conditions of the https://creativecommons.org/licenses/by-sa/4.0/ license and the terms and conditions set by VJST.

Authors are responsible for securing all necessary copyright permissions for the use of third-party materials in their manuscripts.

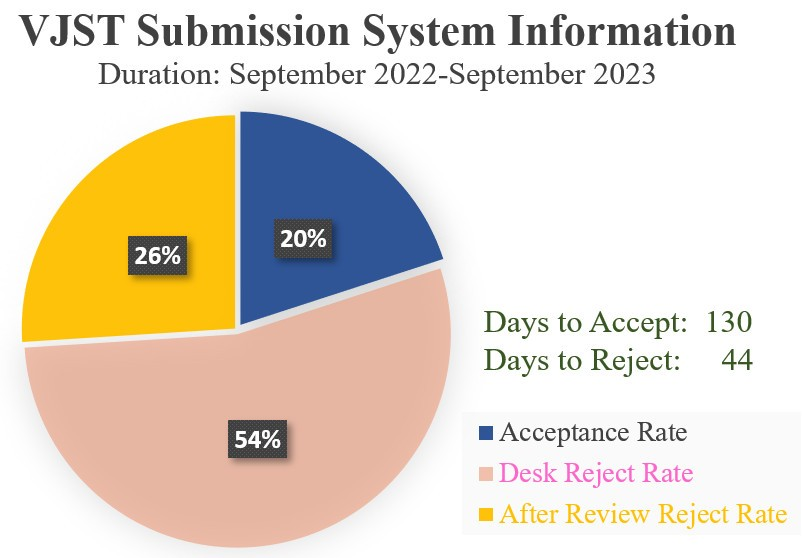

Vietnam Journal of Science and Technology (VJST) is pleased to notice:
